
Young-Chae Son

Welcome! I'm Young-Chae Son, a Ph.D. researcher at Dongguk University’s Interactive Robotics Lab, supervised by Prof. Soochul Lim. My research centers on Physical AI, with a focus on building embodied foundation models that bridge high-level reasoning and low-level robotic control. I develop Vision-Language-Action (VLA) frameworks that integrate multimodal signals (visual, thermal, and physical interaction data) so that robots can perform complex tasks in dynamic, real-world environments. Currently, I am investigating task-aware selective perception and autonomous planning to improve the efficiency and generalizability of robotic manipulation. This site contains my research projects, publications, and academic profile.


Research

I'm interested in computer vision, vision-language models, and robotic planning. Most of my research focuses on inferring physical awareness for robotic decision-making.


Young-Chae Son, Jung-Woo Lee, Yoon-Ji Choi, Dae-Kwan Ko, Soo-Chul Lim*

Under review

A dynamic information fusion framework that selectively processes only task-relevant camera views.


Young-Chae Son, Dae-Kwan Ko, Yoon-Ji Choi, Soo-Chul Lim*

IEEE Robotics and Automation Letters, 2026

Temperature-Aware Framework for Robotic Perception and Decision-Making


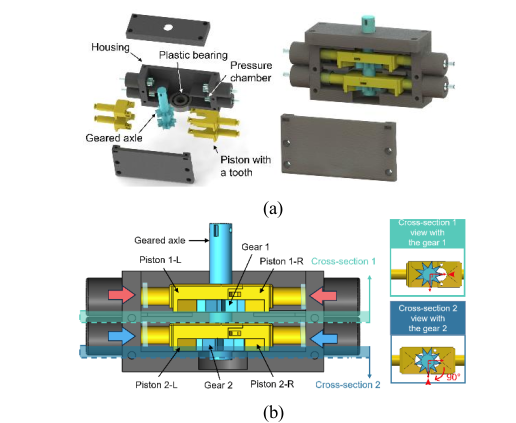

Young-Chae Son, Sung-Jung Joo, Po-Gyu Park, Changjin Yun, Soo-Chul Lim*, Hyung-Kew Lee*

Conference on Precision Electromagnetic Measurements (CPEM), 2026

Development of a Nonmagnetic Pneumatic Stepper Motor for the Automatic Evaluation of Magnetic Field Uniformity in Helmholtz Coils


Young-Chae Son, DongHan Lee, Soo-Chul Lim*

Under review

A robot task automation framework based on large language models (LLMs) that uses both visual and physical information.

Experience & Activities


Projects


Development of Intelligent Autonomous Manipulation Technology for Humanoids with Reduced Dependency on Real-World Data

Jul 2025 – Present

Robot Motion Generation AI based on Multimodal Vision/Tactile Information Driven by Language Model

Mar 2025 – Present

Collecting Large-scale Robot Manipulation Data in Physical Environment

Aug 2024 – Dec 2024


Patent


METHOD, APPARATUS, SYSTEM, AND COMPUTER-READABLE RECORDING MEDIUM FOR ROBOT CONTROL USING THERMAL IMAGE INFORMATION

Apr 2026

Korean Patent Application, No. 10-2026-0067117


Honors & Awards


Global Outstanding Talent Scholarship (Full Tuition Waiver for Ph.D.), Dongguk University

Sep 2025 – Present

Best Paper Award, BK21FOUR AIMS Center Winter Conference, Dongguk University

2026

Grand Prize (1st Place), Transfer Student Counseling Mentoring Testimonial, Tech University of Korea

2024

Grand Prize (1st Place), English Presentation Contest, Tech University of Korea

2024


Presentations


Presentation, "ThermoAct: Thermal-Aware Vision-Language-Action Models..."

Feb 2026

Locomotion and Manipulation Technology Research Group, KRoC, Pyeongchang

Presentation, "ThermoAct: Thermal-Aware Vision-Language-Action Models..."

Feb 2026

Robot Learning Research Group, KRoC, Pyeongchang

Poster, "High SNR and High Frame Rate Analog Front-End ICs..."

Jul 2022

Europe Korea Conference on Science and Technology (EKC), Marseille, France


Teaching


Teaching Assistant, Autonomous Robotics

Spring 2026

Teaching Assistant, Autonomous Robotics

Spring 2025

Teaching Assistant, Computational Mechanics

Fall 2024

Teaching Assistant, Autonomous Robotics

Spring 2024

Teaching Assistant, Fluid Mechanics

Fall 2023
